21,666 Hours of Exposed Credentials, Every Single Day

Your AI agents are holding credentials they don’t need, for tasks they’ve already finished, and nobody can tell which one did what.

Here’s a number that should keep you up at night.

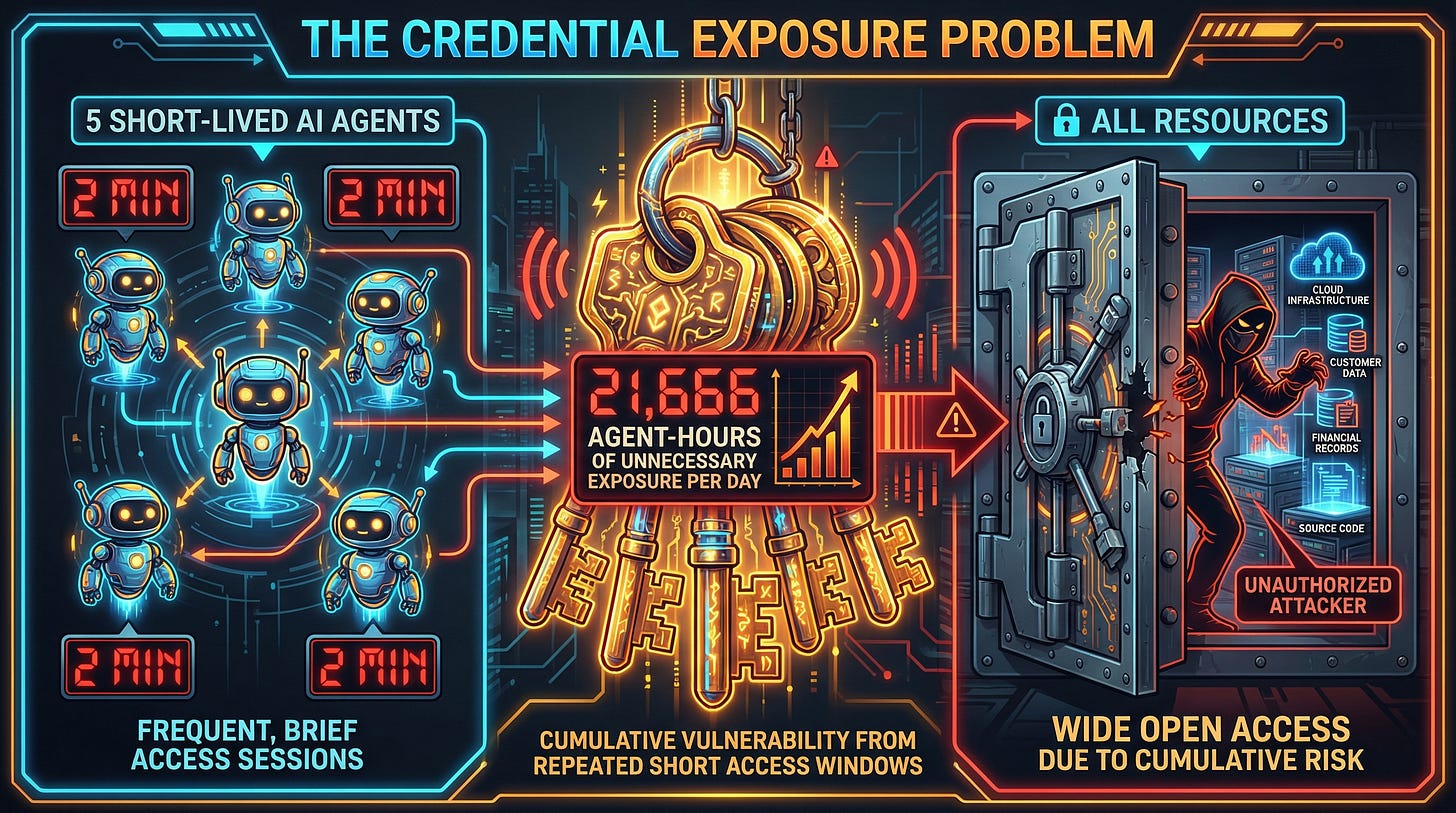

100 AI agents. Each finishes its task in 2 minutes. Each holds a 15-minute OAuth token. That’s 13 minutes of live credentials sitting on an agent that’s already done working. Multiply that across a thousand daily cycles.

21,666 agent-hours of unnecessary credential exposure. Every single day.
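The arithmetic behind that number, as a quick sketch. The variable names are illustrative, and it assumes every one of the 100 agents runs in every cycle and no token is revoked before it expires.

```python
# Inputs taken from the scenario above.
AGENTS = 100
TOKEN_LIFETIME_MIN = 15
TASK_DURATION_MIN = 2
CYCLES_PER_DAY = 1_000

# Minutes a token stays live after its agent has finished working.
idle_minutes_per_run = TOKEN_LIFETIME_MIN - TASK_DURATION_MIN

# Total idle credential time per day, across all agents and cycles.
idle_minutes_per_day = AGENTS * idle_minutes_per_run * CYCLES_PER_DAY

# Whole agent-hours (integer division drops the fractional hour).
idle_hours_per_day = idle_minutes_per_day // 60

print(f"{idle_minutes_per_run} idle minutes per run")
print(f"{idle_hours_per_day:,} agent-hours of idle credentials per day")
```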

Not because anyone was careless. Because the tools we’ve trusted for 15 years (Okta, AWS IAM, OAuth) were never designed for what AI agents actually do.

I help write the security standards that govern AI systems in the cloud: I’m a contributor to the CSA AI Controls Matrix. I’ve been in the rooms where these architecture decisions get made. And over and over, I keep hearing the same answer to the agent identity question.

“We’ll use Okta.”

Or: “We’ll treat it like a service account.”

I’ve been in this space long enough to know what that means. It means nobody’s actually thought about it yet. They’re taking a pattern built for humans and microservices and pasting it onto something that behaves completely differently.

So I started writing. And then I started building.

The Master Key Nobody Talks About

You wouldn’t give a temp contractor a master key to every unit in a building, one that works forever. You’d give them a key to one apartment, for one day, and take it back when they’re done.

That’s not what we do with AI agents. We give them shared service accounts. Broad API keys. OAuth tokens that outlive the task by 10x. And then when something goes wrong (and it will) we can’t answer the three questions that matter most during an incident:

Which agent accessed that data? Was it authorized for that task? Can I shut it down right now?

The answer, in almost every deployment I’ve reviewed, is: we don’t know.

That’s not just a security problem. It’s a compliance problem. It’s the question your auditor will ask after a breach. It’s the answer your CISO will have to give the board. And right now, most teams building with AI agents can’t answer it.

Why the Old Tools Break

This isn’t a configuration problem. It’s not something you can fix by tweaking your Okta policies or writing better IAM roles. These tools rest on five foundational assumptions, and each one breaks completely when you apply it to agents.

We know what the workload is. You can name a microservice. You can point to it. Agent instances are ephemeral, and 500 of them might share one IAM role. When something suspicious hits the logs, you can’t tell which one did it. It’s like having 500 employees badge into a building with the same ID card.

We can predict what it will do. You can audit a microservice’s code path. An LLM makes runtime decisions. A prompt injection could steer it somewhere it was never meant to go, and if it has the permissions, nothing stops it. Imagine a contractor who follows their own judgment about which doors to open, instead of the list you gave them.

Permissions are defined at deploy time. Agents need different permissions for every task. The agent handling ticket #789 should only see Customer #12345, not every customer in the database. Traditional IAM has no concept of “this credential is only valid for this specific task.”

Humans are in the loop. Agents operate autonomously. By the time someone reviews the logs, the damage is done. The alarm goes off after the building is empty.

Workloads don’t need to verify each other. In multi-agent systems, Agent B needs to know Agent A is actually Agent A, not a rogue process claiming to be it. Traditional IAM gives you nothing here. It’s like two delivery drivers showing up at your door and you have no way to check if either of them actually works for the company they say they do.

The Pattern

So I wrote one.

Not a product (yet). A pattern.

Technology-agnostic. Something any team could implement with whatever stack they’re already running.

I called it Ephemeral Agent Credentialing. Six components:

Ephemeral Identity - every agent instance gets a unique cryptographic identity at spawn. Not a shared account. A unique ID tied to that instance, that task, that orchestration. Think of it as issuing a new employee badge for every single shift, one that has the worker’s name, their assignment, and a timestamp on it.

Task-Scoped Tokens - this is the one that changes everything. Instead of giving an agent broad access to “read all customers,” the token says read:Customer:12345. Just that customer. Just for that task. And the token lives for 5 minutes, not 15. If you’re helping Customer #12345 with a support ticket, you have no business reading Customer #67890’s records. The credential enforces that.

Zero-Trust Enforcement - every request validated. Signature, expiration, scope, revocation status. Every single time. No “trusted network” shortcuts. No cached approvals.

Automatic Expiration & Revocation - credentials die with the task. Anomaly detected? Immediate revocation. Not “wait 14 minutes for the token to expire.” The key gets taken back the moment the job is done or the moment something looks wrong.

Immutable Audit Logging - every action traced to a specific agent instance, task, and timestamp. Real attribution. When the auditor asks “which agent accessed that data at 2:47 AM,” you have an answer.

Mutual Authentication - when agents talk to each other, both sides verify identity. No impersonation. Both delivery drivers check each other’s badges before exchanging packages.
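To make the first four components concrete, here is a minimal sketch using only Python’s standard library. It is not a reference implementation of the pattern. The names `issue_token`, `validate`, `revoke`, `SIGNING_KEY`, and `REVOKED` are hypothetical, the shared HMAC key stands in for whatever signing scheme a real deployment would use (most likely asymmetric keys bound to each agent instance), and the in-memory set stands in for a shared revocation store.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time
import uuid
from typing import Optional

# Hypothetical issuer state, kept in memory for this sketch only.
SIGNING_KEY = secrets.token_bytes(32)
REVOKED = set()  # token IDs (jti) that must no longer be honored


def _sign(payload: bytes) -> bytes:
    """HMAC-SHA256 over the encoded claims, hex-encoded."""
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest().encode()


def issue_token(task_id: str, scope: str, ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Mint a credential for one agent instance, one task, one scope."""
    issued_at = time.time() if now is None else now
    claims = {
        "jti": uuid.uuid4().hex,               # unique token ID, used for revocation
        "agent": f"agent-{uuid.uuid4().hex}",  # unique per-instance identity
        "task": task_id,
        "scope": scope,                        # e.g. "read:Customer:12345"
        "exp": issued_at + ttl_seconds,        # 5 minutes by default
    }
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    return payload.decode() + "." + _sign(payload).decode()


def validate(token: str, required_scope: str,
             now: Optional[float] = None) -> dict:
    """Run on every request: signature, expiration, scope, revocation."""
    current = time.time() if now is None else now
    payload, _, signature = token.partition(".")
    if not hmac.compare_digest(_sign(payload.encode()), signature.encode()):
        raise PermissionError("bad signature")
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if current >= claims["exp"]:
        raise PermissionError("expired")
    if claims["scope"] != required_scope:
        raise PermissionError("out of scope")
    if claims["jti"] in REVOKED:
        raise PermissionError("revoked")
    return claims


def revoke(jti: str) -> None:
    """Kill a credential immediately, at task completion or on an anomaly."""
    REVOKED.add(jti)
```

A token issued with `issue_token("ticket-789", "read:Customer:12345")` passes `validate` for that exact scope, while asking for `read:Customer:67890` raises `PermissionError("out of scope")`. Calling `revoke(claims["jti"])` makes every later `validate` fail right away, without waiting for the expiry.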

Together, these components reduce credential exposure by 10-50x, contain blast radius, and give you real accountability.
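Accountability depends on the audit log being hard to rewrite after the fact. One common way to get tamper evidence (not true immutability, which needs append-only storage underneath) is to chain each entry to the hash of the one before it. A minimal sketch, with hypothetical names `append_entry` and `verify_chain` and an in-memory list standing in for the log store:

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over an entry's fields (sorted keys for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, agent_id: str, task_id: str, action: str,
                 timestamp=None) -> dict:
    """Record one action, linked to the hash of the previous entry."""
    body = {
        "agent": agent_id,
        "task": task_id,
        "action": action,
        "ts": time.time() if timestamp is None else timestamp,
        "prev": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return False if any entry was edited, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

Because every entry carries the agent instance, the task, and a timestamp, a question like “which agent accessed that data at 2:47 AM” becomes a lookup, and editing any earlier record makes `verify_chain` return `False`.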

What it doesn’t do: prevent prompt injection, filter content, or sandbox agent runtimes. Those need their own solutions. Guardrails tell the agent what it shouldn’t do. Ephemeral credentialing limits what it can do regardless of what it tries. That’s an important distinction. They’re complementary, not competing.

This Was Version 1.0

That was October 2025. Six components on paper. A pattern with no scars.

Then people started asking hard questions. And the real world answered some of them for me.

In Part 2, I’ll show what happened when the pattern collided with a real CVE (CVSS 9.3), four different standards bodies publishing findings that validated the same problem, and the hardest component I hadn’t fully solved yet: what happens when agents delegate work to other agents and you need to prove the chain of authority is legitimate.

If you’re building with AI agents and wrestling with the identity question, or if you’ve been told “just use Okta” and something felt off about that answer, I’d love to hear how you’re thinking about it.

This is a 15-part series about building the solution in public.

The pattern is open (CC BY-SA 4.0). The conversation should be too.