A 9.3 CVE, Four Standards Bodies, and the Component That Kept Me Up at Night

What happened when a security pattern on paper met real-world attacks, hard questions, and the delegation problem nobody had solved.

In October 2025, I published a security pattern for AI agents: six components designed to solve the identity and credential problem that every team building with agents is quietly ignoring. It was clean on paper. Logical. Complete.

Then someone asked: “What exactly does this defend against?”

Fair question. And I didn’t have a precise enough answer.

That’s the thing about security patterns: they don’t mature in a document. They mature when people poke holes in them, when CVEs drop that prove your point in the worst possible way, and when the standards bodies you’ve been watching start publishing findings that say the same thing you’ve been saying in architecture meetings for months.

Three things happened between version 1.0 and where the pattern is today. Each one changed it.

“What Do You Stop and What Don’t You?”

“This solves the AI agent identity problem” isn’t good enough in security. Security people want to know exactly what you stop and exactly what you don’t. The fastest way to lose credibility is to claim your solution stops everything. It never does.

So I wrote out the boundaries explicitly.

The pattern defends against: external attackers stealing credentials, compromised individual agents, lateral movement across systems, malicious insiders, and rogue agents behaving outside their intended scope.

What it explicitly does not defend against: compromise of the credential service itself, prompt injection, data poisoning, or cryptographic breaks. Those need complementary controls.

Being honest about the boundaries matters. The question isn’t whether your solution stops everything; it’s whether it stops the right things, and whether you’re transparent about the rest. Security people respect boundaries. They don’t respect hand-waving.

Then Someone Lifted the Welcome Mat

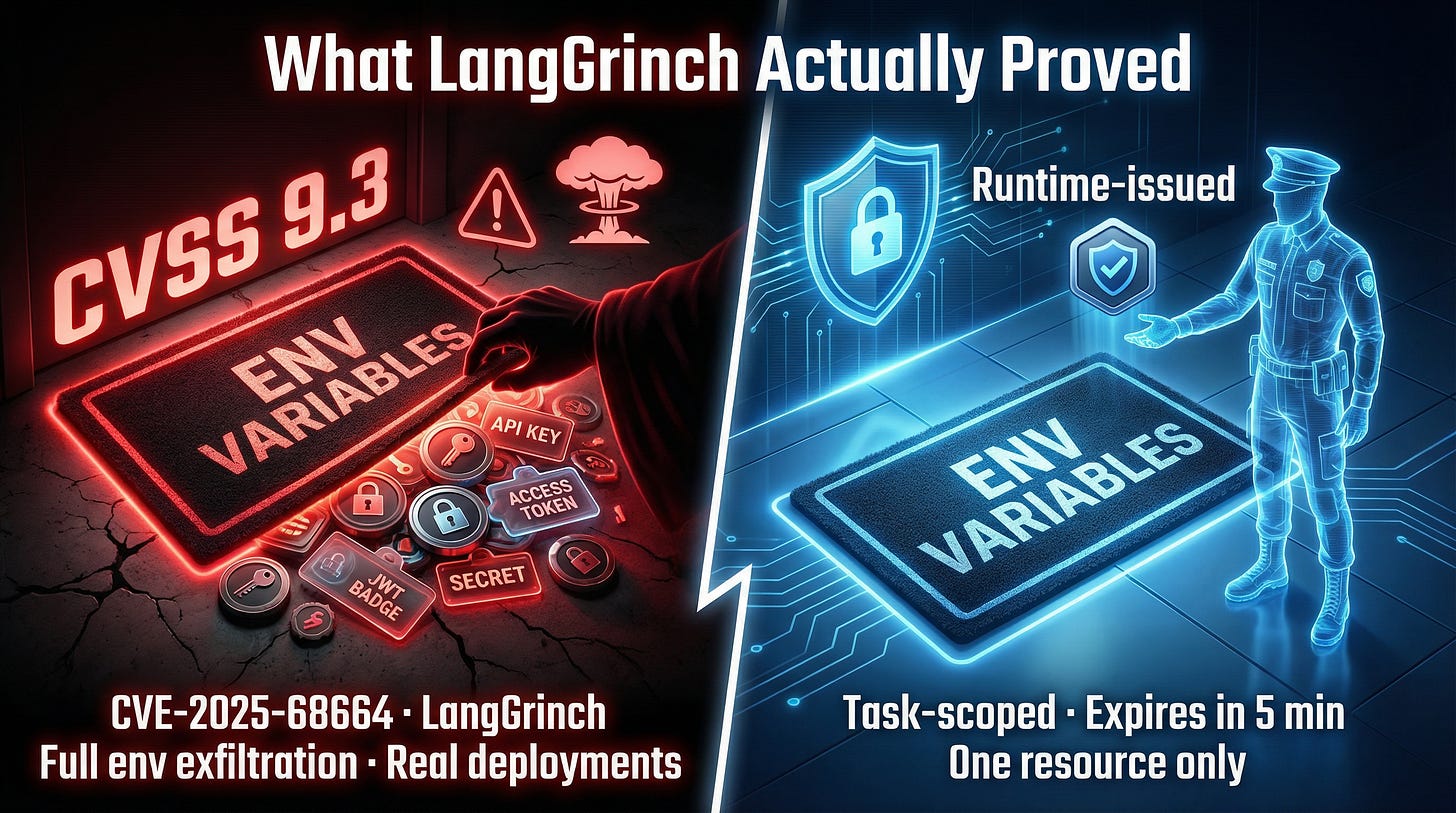

In December 2025, someone proved that every secret stored in an AI agent’s environment could be stolen in a single request.

CVE-2025-68664. CVSS 9.3: that’s “critical” on a 10-point scale where most serious vulnerabilities land around 7.

The industry named it LangGrinch. It was a serialization injection flaw in LangChain, one of the most widely used AI agent frameworks in production, that allowed full environment variable exfiltration. Cloud credentials. Database connection strings. API keys. Everything stored in the agent’s environment, gone.

This wasn’t theoretical. Real deployments. Real exposure. Real teams finding out that the secrets they’d baked into their agent environments were never as safe as they assumed.

And it was a textbook demonstration of exactly what I’d been writing about. Think about it this way: if you hide all your house keys under the welcome mat, the vulnerability isn’t that someone might look under the mat. It’s that you put all your keys in the same place. LangGrinch was someone lifting the mat.

If those agents had been using runtime-issued, task-scoped credentials instead of static secrets sitting in environment variables:

There would have been nothing to exfiltrate. Agents get credentials at runtime, not from env vars baked in at startup. The mat is empty because the keys aren’t stored there; they’re handed to the agent at the door, for one room, for one visit.

Even if tokens leaked, they’d expire in minutes and only grant access to one specific resource. A stolen 5-minute token scoped to read:Customers:12345 is a very different problem than a stolen API key with full database access that never expires.

The audit trail would have flagged unusual credential access patterns before the damage spread. You’d see the anomaly. You’d have attribution. You’d be able to answer the question “what got accessed and by whom” instead of shrugging at the incident report.
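To make those three points concrete, here is a minimal Python sketch of a task-scoped, short-lived token. It assumes an HMAC-signed token format and uses hypothetical names (`issue_token`, `authorize`, `BROKER_KEY`, `AUDIT_LOG`); it is not the pattern’s actual broker or any particular library’s token format. It only shows why a leaked token is a bounded problem: it names one resource, dies after 300 seconds, and every use leaves an attributable audit entry.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

# Hypothetical broker key for illustration only; a real broker would use managed,
# ideally asymmetric, keys rather than a constant in source code.
BROKER_KEY = b"demo-broker-key"
# Every authorization decision is recorded so an incident has attribution.
AUDIT_LOG: List[dict] = []


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode().rstrip("=")


def _sign(body: str) -> bytes:
    return _b64(hmac.new(BROKER_KEY, body.encode(), hashlib.sha256).digest()).encode()


def issue_token(agent_id: str, scope: str, ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Mint a token bound to one agent, one resource scope, and a short lifetime."""
    issued = time.time() if now is None else now
    claims = {"sub": agent_id, "scope": scope, "exp": issued + ttl_seconds}
    body = _b64(json.dumps(claims, sort_keys=True).encode())
    return f"{body}.{_sign(body).decode()}"


def authorize(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Allow only an untampered, unexpired token whose scope matches exactly."""
    current = time.time() if now is None else now
    body, _, sig = token.partition(".")
    if not hmac.compare_digest(sig.encode(), _sign(body)):
        AUDIT_LOG.append({"agent": None, "scope": required_scope, "ok": False, "ts": current})
        return False
    claims = json.loads(base64.urlsafe_b64decode(body + "=" * (-len(body) % 4)))
    ok = current < claims["exp"] and claims["scope"] == required_scope
    AUDIT_LOG.append({"agent": claims["sub"], "scope": required_scope, "ok": ok, "ts": current})
    return ok


token = issue_token("agent-42", "read:Customers:12345", now=1000.0)
print(authorize(token, "read:Customers:12345", now=1100.0))  # True: right scope, still fresh
print(authorize(token, "read:Customers:99999", now=1100.0))  # False: a different customer
print(authorize(token, "read:Customers:12345", now=1400.0))  # False: expired at 1300
```

Compare that with a static API key in an environment variable: there is no scope to check, no expiry to enforce, and no per-use record tying access back to a specific agent.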

LangGrinch validated the core design principle in the most uncomfortable way possible: the industry was still storing long-lived secrets where agents could reach them, and someone showed the world exactly why that matters.

Four Organizations, Four Mandates, One Conclusion

While LangGrinch was making headlines, I wasn’t the only one seeing this problem. Within weeks of each other, four different standards bodies published findings that converged on the same conclusion.

OWASP dropped the Top 10 for Agentic Applications in December 2025. Two items mapped directly to this pattern: ASI03 (Identity & Privilege Abuse) and ASI07 (Insecure Inter-Agent Communication). These weren’t vague recommendations. They were explicit warnings about the exact gaps ephemeral credentialing was designed to close.

NIST published IR 8596, its Cyber AI Profile, explicitly calling for AI systems to be issued unique identities and credentials rather than shared service accounts.

The IETF WIMSE working group started standardizing workload identity for AI agent scenarios, acknowledging that current standards don’t cover this.

The Cloud Security Alliance (the same organization I contribute to) declared traditional IAM “fundamentally inadequate” for AI agents.

Four organizations. Four different mandates. Same conclusion: what we’re doing today isn’t working, and the gap is widening as agent adoption accelerates.

That convergence doesn’t make the solution obvious. But it makes the urgency undeniable. If you’re planning to address this “later,” later has arrived: it’s now the subject of multiple active standards efforts. The window for getting ahead of it is closing.

The VP, the Manager, and the Intern Who Approved $50,000

Here’s the scenario that kept me up at night. And honestly, it’s the one that separates “good enough on paper” from “actually works in production.”

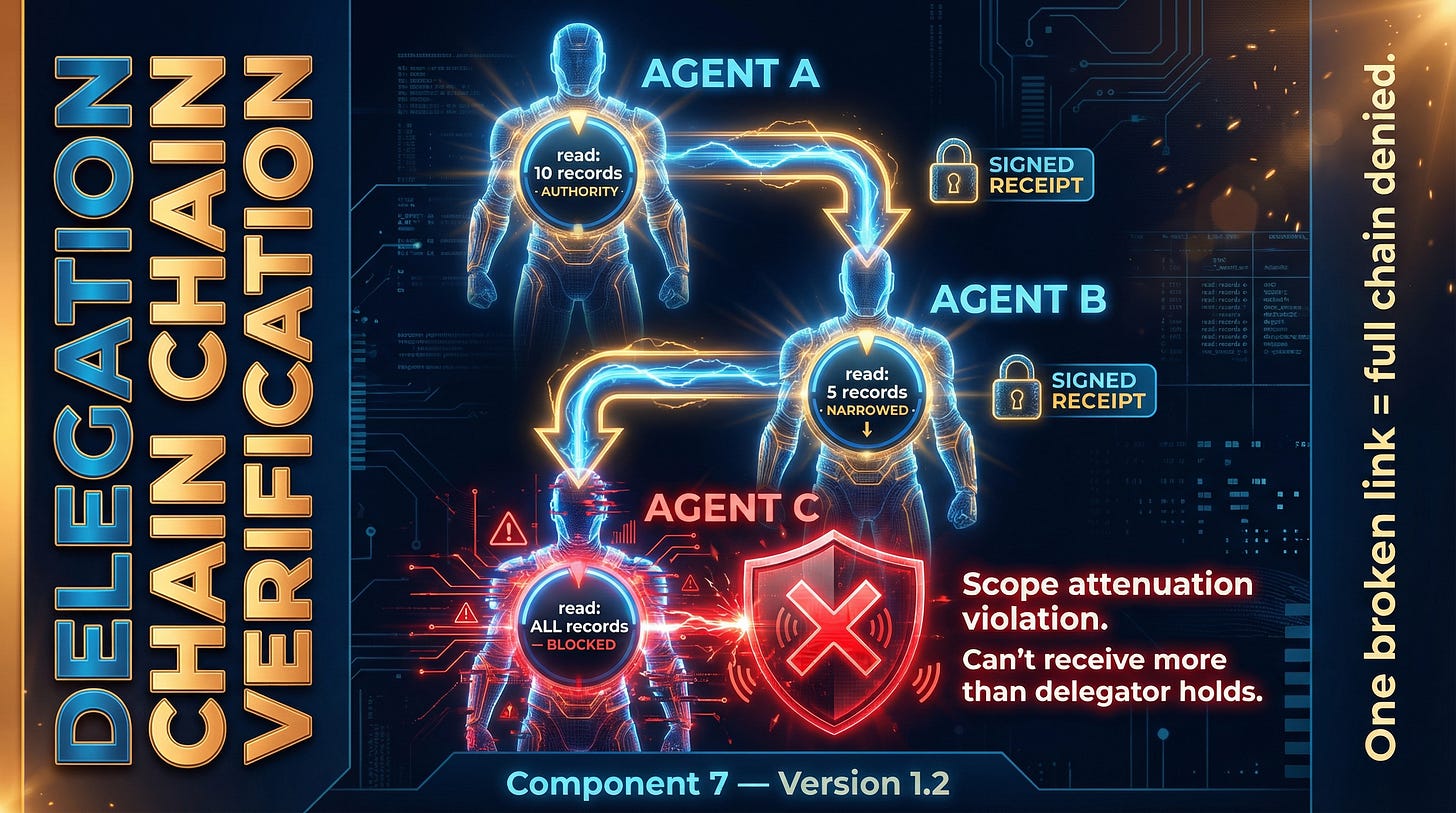

Agent A delegates work to Agent B. Agent B delegates to Agent C. Agent C accesses a resource. How does the resource server know that chain of authority is legitimate? How do you prevent Agent C from claiming permissions it was never actually given?

Think of it like this: a VP authorizes a manager to approve a $10,000 purchase. The manager tells an intern, “I’m authorized to approve purchases, so you can approve them too.” The intern approves a $50,000 purchase. Without a paper trail showing exactly who authorized what, with what limits, at each step, the company has no way to know if that chain of authority is real or fabricated.

That’s what happens in multi-agent systems today. An agent says “Agent A told me I could write to the customer database” and without chain verification, there’s no way to prove or disprove that. You’re just trusting what the agent says about itself.

That’s not security. That’s hope.

This became Component 7 in version 1.2: Delegation Chain Verification. The rules:

Every delegation step creates a cryptographically signed record. Not a claim. A receipt.

Permissions can only narrow at each hop, never expand. If Agent A can read 10 customer records, Agent B can read 10 or fewer. Never 11. Never “all.” The VP can authorize up to $10,000; the intern can’t turn that into $50,000.

Any verifier can trace the full chain back to the original authority. Every link is auditable.

If any link fails verification, the entire request is denied. One broken link kills the chain.
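The four rules can be sketched in Python as follows. This is an illustration under stated assumptions, not the Component 7 implementation: `delegate`, `verify_chain`, the per-principal `KEYS`, and `TRUSTED_ROOTS` are all hypothetical, and HMAC stands in for the public-key signatures a real verifier would check so that it never holds anyone’s signing key. Each receipt also carries a hash of its parent, so links can’t be spliced in from a different chain.

```python
import hashlib
import hmac
import json
from typing import Dict, List, Optional

# Hypothetical per-principal keys. HMAC keeps the sketch stdlib-only; a real verifier
# would check public-key signatures so it never holds anyone's signing key.
KEYS: Dict[str, bytes] = {"vp": b"vp-key", "manager": b"manager-key", "intern": b"intern-key"}
TRUSTED_ROOTS = {"vp"}  # principals allowed to start a chain


def _payload(link: dict) -> bytes:
    fields = {k: link[k] for k in ("from", "to", "scopes", "limit", "parent")}
    return json.dumps(fields, sort_keys=True).encode()


def _sig(issuer: str, link: dict) -> str:
    return hmac.new(KEYS[issuer], _payload(link), hashlib.sha256).hexdigest()


def _digest(link: dict) -> str:
    # Hash of the full signed receipt, used to bind the next link to this one.
    return hashlib.sha256(_payload(link) + link["sig"].encode()).hexdigest()


def delegate(issuer: str, to: str, scopes: List[str], limit: int,
             parent: Optional[dict] = None) -> dict:
    """Create a signed delegation receipt chained to its parent by hash."""
    link = {"from": issuer, "to": to, "scopes": sorted(scopes), "limit": limit,
            "parent": _digest(parent) if parent else None}
    link["sig"] = _sig(issuer, link)
    return link


def verify_chain(chain: List[dict], requester: str, scope: str, amount: int) -> bool:
    """Deny unless every link is authentic, connected, narrowing, and rooted in trust."""
    if not chain or chain[0]["from"] not in TRUSTED_ROOTS:
        return False
    prev = None
    for link in chain:
        if link["from"] not in KEYS:
            return False  # unknown signer
        if not hmac.compare_digest(link["sig"].encode(), _sig(link["from"], link).encode()):
            return False  # forged or altered receipt
        if prev is None:
            if link["parent"] is not None:
                return False  # the root link must not claim a parent
        elif link["from"] != prev["to"] or link["parent"] != _digest(prev):
            return False  # broken or spliced chain
        elif not set(link["scopes"]) <= set(prev["scopes"]) or link["limit"] > prev["limit"]:
            return False  # permissions tried to expand
        prev = link
    # Only the last link's (narrowest) permissions are ever granted.
    return prev["to"] == requester and scope in prev["scopes"] and amount <= prev["limit"]


root = delegate("vp", "manager", ["approve_purchase"], 10_000)
hop = delegate("manager", "intern", ["approve_purchase"], 10_000, parent=root)
print(verify_chain([root, hop], "intern", "approve_purchase", 5_000))   # True
print(verify_chain([root, hop], "intern", "approve_purchase", 50_000))  # False: over the limit

inflated = delegate("manager", "intern", ["approve_purchase"], 50_000, parent=root)
print(verify_chain([root, inflated], "intern", "approve_purchase", 50_000))  # False: expanded

forged = dict(hop, limit=50_000)  # the intern edits the receipt without the manager's key
print(verify_chain([root, forged], "intern", "approve_purchase", 50_000))  # False: bad signature
```

The design choice worth noticing is that `verify_chain` stops at the first failure and grants the request only against the last link’s permissions, so nothing an agent claims about itself can widen what it is allowed to do.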

Simple to state. Hard to implement correctly. But without it, multi-agent systems are wide open to privilege escalation through forged delegation claims.

The 3 AM Test: What Production Actually Demands

The latest version added the things that production deployments need but architecture diagrams always forget. None of it is glamorous. None of it makes a good conference talk. But it’s the difference between a pattern that works on a whiteboard and one that works at 3 AM when something breaks.

Operational Observability - standardized error contracts so agents don’t hallucinate when access is denied (this is a real problem: when an LLM gets an unexpected 403, it doesn’t always handle it gracefully). Plus KPI metrics and “why-denied” tracing for debugging.

Privacy by Design - audit logs that redact PII and prompts while preserving forensic utility. You need to be able to investigate an incident without creating a new privacy violation in the process.

Crash Recovery - what happens when an agent dies mid-task and restarts? Does it get a new credential? Does the old one get revoked? What about the work in progress?

Token Renewal - for legitimate long-running agents that outlive a single token TTL. Not every agent finishes in 2 minutes. Some need 30 minutes. The credential system needs to handle both without compromising the short-lived principle.
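For the Operational Observability item, here is one possible shape for a standardized denial, sketched in Python. The field names (`code`, `retryable`, `agent_action`, `trace_id`) and the `deny_missing_scope` helper are hypothetical; the point is that the agent receives an explicit next step instead of a bare 403 it has to interpret on its own.

```python
import json
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DenialContract:
    """Hypothetical machine-readable denial body, returned alongside the 403."""
    code: str            # stable identifier that agents and dashboards can match on
    reason: str          # human-readable explanation for operators
    required_scope: str  # what the request would have needed
    retryable: bool      # whether asking again could ever succeed
    agent_action: str    # explicit next step, so the model doesn't improvise one
    trace_id: str        # key for looking up the "why-denied" decision trace


def deny_missing_scope(required_scope: str) -> str:
    """Serialize a denial for a request whose token lacks the needed scope."""
    contract = DenialContract(
        code="SCOPE_NOT_GRANTED",
        reason=f"The presented token does not grant {required_scope}.",
        required_scope=required_scope,
        retryable=False,
        agent_action="Stop this step and report the denial to the orchestrator. "
                     "Do not retry or substitute other credentials.",
        trace_id=uuid.uuid4().hex,
    )
    return json.dumps(asdict(contract))


print(deny_missing_scope("write:Customers:12345"))
```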
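For Privacy by Design, a minimal sketch of an audit entry that keeps the forensic fields (who, what, outcome) but never stores the raw prompt. The keyed digest, using a hypothetical `LOG_PEPPER` secret, still lets an investigator see that two events involved the same prompt without being able to read it. The email regex is a deliberately narrow example, not a complete PII detector.

```python
import hashlib
import hmac
import re

# Hypothetical secret "pepper": without it, short prompts could be recovered by
# hashing likely guesses and comparing digests.
LOG_PEPPER = b"rotate-me-regularly"
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text: str) -> str:
    """Mask email addresses; a real deployment needs a broader PII detector."""
    return EMAIL.sub("[EMAIL]", text)


def audit_record(agent_id: str, scope: str, decision: str, prompt: str, note: str = "") -> dict:
    """Keep who/what/outcome for forensics; never store the raw prompt."""
    digest = hmac.new(LOG_PEPPER, prompt.encode(), hashlib.sha256).hexdigest()
    return {
        "agent": agent_id,
        "scope": scope,
        "decision": decision,
        "prompt_digest": digest[:16],  # same prompt, same digest: correlatable, not readable
        "prompt_chars": len(prompt),
        "note": redact(note),
    }


print(audit_record("agent-42", "read:Customers:12345", "allow",
                   prompt="Summarize open orders for jane@example.com",
                   note="requested on behalf of jane@example.com"))
```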
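And for Token Renewal, a sketch that keeps each token short-lived while capping the total session. It assumes a 5-minute token TTL and a 30-minute ceiling (both illustrative values) and a hypothetical `Grant` record kept by the broker. Renewal requires a live, unrevoked grant, copies the scope unchanged so it can never widen, and revokes the token it replaces.

```python
from dataclasses import dataclass, replace
from typing import Optional

TOKEN_TTL = 5 * 60     # assumed per-token lifetime (seconds)
MAX_SESSION = 30 * 60  # assumed hard ceiling on a task's total lifetime (seconds)


@dataclass
class Grant:
    """Hypothetical broker-side record for one task-scoped credential."""
    agent_id: str
    scope: str
    session_start: float
    expires_at: float
    revoked: bool = False


def renew(grant: Grant, now: float) -> Optional[Grant]:
    """Swap a live grant for a fresh short-lived one, or refuse."""
    if grant.revoked or now >= grant.expires_at:
        return None  # a dead token can't bootstrap a new one
    if now - grant.session_start >= MAX_SESSION:
        return None  # past the ceiling: re-authorize the task from scratch
    grant.revoked = True  # the replaced token stops working immediately
    new_expiry = min(now + TOKEN_TTL, grant.session_start + MAX_SESSION)
    # Same agent, same scope: renewal extends time, never permissions.
    return replace(grant, expires_at=new_expiry, revoked=False)


g = Grant("agent-7", "read:Customers:12345", session_start=0.0, expires_at=300.0)
g2 = renew(g, now=240.0)
print(g2.expires_at, g.revoked)  # 540.0 True
print(renew(g, now=250.0))       # None: the old grant was revoked on renewal
```

A 2-minute agent never calls `renew`; a 30-minute agent renews a handful of times, and each individual token still expires within minutes.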

Nobody Gets It Right the First Time

Three versions in, here’s the biggest thing I took away: security patterns are living documents.

Every real deployment, every CVE, every standards publication either validates your assumptions or forces you to update them. Building in public means showing that evolution, not pretending you got it right the first time.

Nobody gets it right the first time. The ones who say they did aren’t being honest about what they shipped.

The pattern is better for the pressure. LangGrinch made the case I couldn’t make alone. The standards gave it credibility I couldn’t manufacture. And the hard questions from people who wanted to break it made it tighter.

That’s how patterns grow up.

In Part 3, I’ll talk about the decision to go from pattern to product (why Go for the broker and Python for the demo) and the concept that made everything click: showing the gap and the fix side by side, so people don’t just understand the problem in the abstract. They feel it in a live system.

If you’ve been through a similar evolution, where the real world forced your design to get better, I’d love to hear about it.

And if LangGrinch hit your team, I’m especially curious how you responded.